Image generated by ChatGPT

An analogy keeps coming to mind as I work, talk, and write about AI tools, and it links using AI tools with parenting. Parenting can be exhausting, and working with AI can also be exhausting. Don’t get me wrong, the two types of ‘exhausting’ are not equal, but sometimes when working with AI tools, I feel like I am raising another child.

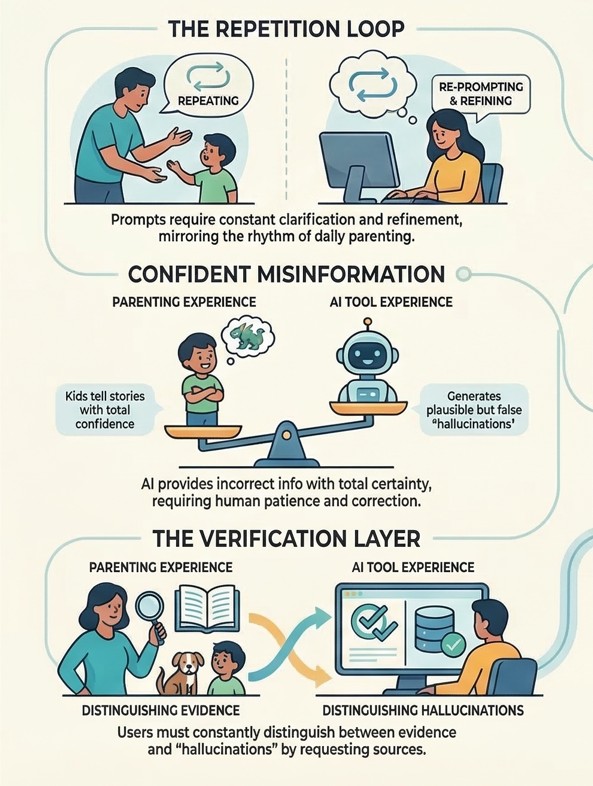

I have three children, so I am well-versed in needing to repeat myself. Anyone who has raised children or is still raising them knows there is a rhythm to daily interactions. You say something to your kid/s. Then you repeat yourself. Then again. And maybe another time, as you start to either raise your voice or question whether you are somehow not being heard or are being intentionally ignored.

Working with AI tools can sometimes feel similar.

You write a prompt.

You clarify it.

You refine it again (and again).

AKA you repeat yourself.

Sometimes you get a response that is appropriate or useful. Sometimes you don’t.

Sometimes the issue is ours: we communicated our desired outcomes ineffectively in the prompt. Other times, the issue lies with the ‘tool’: the system produces text that doesn’t reflect what we wanted and has little value. Worse still is when the tool produces these low-value outputs with confidence.

And this can feel like parenting.

Children can respond with enormous confidence, even when they are talking about something they know very little about. Most days, my kids tell me something with complete certainty, and that certainty extends to topics they may know nothing about. I always take a deep breath when the conversation starts with, “Mum, did you know?” because sometimes I might learn something new. Many other times, however, the information presented is partly accurate or outright incorrect. In some circumstances, I respond with a ‘hmmm’ and a ‘Did you know?’ to add some additional or clarifying information. Sometimes I tell them straight out that the information is incorrect. But regardless of the topic, their confidence in the information they share is fascinating.

AI tools can behave similarly. They respond quickly, and with a level of confidence that can make the output sound accurate, even when it is wrong. And AI tools can get it wrong and be very wrong.

And it is here, again, that I take a deep breath as the cognitive load of working with the tool increases. I find myself practising patience, particularly when I am trying to use AI tools to help me synthesise information I have already written. I frequently get outputs that include statements I know I did not write or arguments I know I have not made. The discrepancy is immediately obvious to me, and it usually prompts a written response: ‘That is not correct’.

It’s not that the tool has failed; it has, as intended, generated outputs. Most AI tools are optimised to be helpful and responsive, which means they are more likely to produce a plausible response than to admit uncertainty. It would be a rare, a very rare, occasion for an interaction to include the tool saying ‘I don’t know’, because many AI tools are not incentivised to do so, and many users (me included) do not prompt in ways that allow for it.

When chatting with my kids, and when working with humans on their decision-making frameworks, I encourage them to pause and reflect on the sources and basis of their outputs, and to identify the information that is informing what they are saying and writing. I ask them, ‘Do you think that, or do you know that?’ This reflective question is valuable because it helps decision-makers distinguish between assumptions and evidence. Between rhetoric, research and reality.

Asking about sources and references becomes part of prompting when working with AI tools, because it adds another layer of checking, interpreting and validating.

A further challenge arises when the outputs are inaccurate or are outright hallucinations. It is important to recognise that it is easy for me to identify hallucinations in these instances, because I am intimately familiar with my own intellectual property and footprint. I know what I have written, I know what I have said, and importantly, I know what I have not said. So, once again, I find myself questioning whether I have been heard or intentionally ignored.

And here I find myself repeating myself again, as I do as a parent. I am re-prompting, checking, reviewing, and requesting references. None of this is particularly difficult work, but it does require additional cognitive effort because it involves sustained monitoring and management, as well as advising the tool when it produces incorrect outputs.

Image generated by NotebookLM

Raising Your AI

This brings me to another thought about the connection between parenting and AI tools.

You don’t need to extend any congratulations, but… my AI tools are another week older.

This is a strange way to talk about AI tools, but hear me out. When babies are born, we measure time and age differently. We talk about babies being 6 weeks old, 8 weeks old, 12 weeks old, and we note their milestones: their first smile, their first roll-over, their first steps. Progress is gradual. We get to know the baby more as their personality and capabilities emerge week after week.

I notice shifts over time as I work more and more with AI tools. Based on the tools’ previous outputs and behaviour, I amend my prompts, and I expand my ability to judge the usefulness and accuracy of their outputs. Just as with young kids, working with AI tools requires learning what the system does well and where it needs to improve. And just like parenting, the milestones associated with gaining a more mature and useful AI tool are accompanied by moments of frustration, correction and recalibration.

And this is where decision-makers benefit from acknowledging the hidden work associated with parenting AI. While much of the talk about AI tools focuses on what they can do, we benefit from understanding that adding more AI tools to our workflow doesn’t automatically result in efficiencies or accurate outputs. In some cases, it multiplies the number of outputs that we humans must review and judge.

The hidden work of using and managing AI tools is increasingly recognised, as evidenced by a recent Harvard Business Review article, “When Using AI Leads to Brain Fry”. The authors call out this growing tension and note, “AI promises to act as an amplifier that will drive efficiency and make work easier, but workers that are using these AI tools report that they are intensifying rather than simplifying work.” Introducing the notion of ‘AI Brain Fry’ (mental fatigue that results from excessive use of, interaction with, and/or oversight of AI tools beyond one’s cognitive capacity), the authors point out that there are potentially negative decision-making and business implications associated with AI tools.

From my perspective, the organisations that will benefit from AI tools will be those that design their systems to consider the interrelationship between humans and technology, and understand that some ‘parenting’ time is required. You will benefit from building in time for people to learn how to work with these tools, and time to ‘raise’ their AI tools from baby to toddler to school age, so that the tool’s outputs are more stable and the interactions are more predictable.